19/03/2026 by Anthony Silver of Talent Recognition

Why the Term “AI” Is Nonsense

In 2010, just 261 electric vehicles were sold in the UK. My brother bought one: a Reva G-Wiz, which was less a car than a quadricycle. Last year, roughly one in every four cars sold in the UK was an EV, around 475,000 units.

Back in 2010, “EV” was a useful classification because the choice was limited. Today it is largely meaningless. There are multiple types of EV, and when buying a car, you are far more likely to classify by vehicle type, such as SUV, hatchback or saloon, than by propulsion method.

In just 15 years, a once-useful label lost its explanatory power.

The same thing is now happening with “AI”.

A Horrible History of AI

Artificial Intelligence has existed as a field since the 1950s, but it only became broadly practical with the arrival of high-speed processing, GPUs, and massive datasets in the 2010s, roughly the same period in which EVs became viable.

Early commercial AI was rules-based. The same input always produced the same output. It was adopted for one reason: speed. Extreme speed.

This is deterministic AI.

In 2021, Generative AI models such as DALL-E, which converted text descriptions into images, began to attract wide attention. In late 2022, ChatGPT brought Generative AI into the mainstream.

Generative AI differs fundamentally from deterministic systems. It is probabilistic, not rules-based. It uses pattern recognition to generate new content. Ask it a question and it produces the most likely answer, not necessarily the correct one.
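To make the distinction concrete, here is a minimal Python sketch. Everything in it is a hypothetical illustration rather than how any real product works: `approve_order` stands in for a deterministic business rule, and the tiny `NEXT_WORD` table of made-up probabilities stands in for a generative model, which in reality works over tokens and learned weights. The rule returns the same answer every time; the sampler can return a fluent but wrong answer.

```python
import random

# Deterministic: a hypothetical, explicit approval rule.
# The same input always produces the same output.
def approve_order(amount: float, customer_rating: int) -> bool:
    return amount <= 5_000 and customer_rating >= 3

# Probabilistic: a toy stand-in for a generative model. The probabilities
# are invented for illustration; real models learn them from data.
NEXT_WORD = {
    "The capital of Australia is": {"Canberra": 0.6, "Sydney": 0.3, "Melbourne": 0.1},
}

def generate(prompt: str, rng: random.Random) -> str:
    options = NEXT_WORD[prompt]
    # Sample one answer in proportion to its weight, so repeated calls can differ.
    return rng.choices(list(options), weights=list(options.values()), k=1)[0]

if __name__ == "__main__":
    print([approve_order(4_200, 4) for _ in range(3)])  # [True, True, True]
    rng = random.Random()
    print([generate("The capital of Australia is", rng) for _ in range(5)])
```

Run it a few times and the first list never changes, while the second varies and will sometimes include "Sydney": plausible, confidently stated and incorrect.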

What makes Generative AI dangerous as well as powerful is its fluency: it can present incorrect answers with extraordinary confidence.

Adoption has been remarkably fast. From a standing start in November 2022, by late 2025 an estimated one in six people worldwide, around 1.4 billion, had used generative AI.

Then comes Agentic AI. Unlike deterministic or generative systems, Agentic AI acts autonomously: it makes decisions and executes them without human intervention. For anyone who remembers the Terminator films, Skynet was agentic.

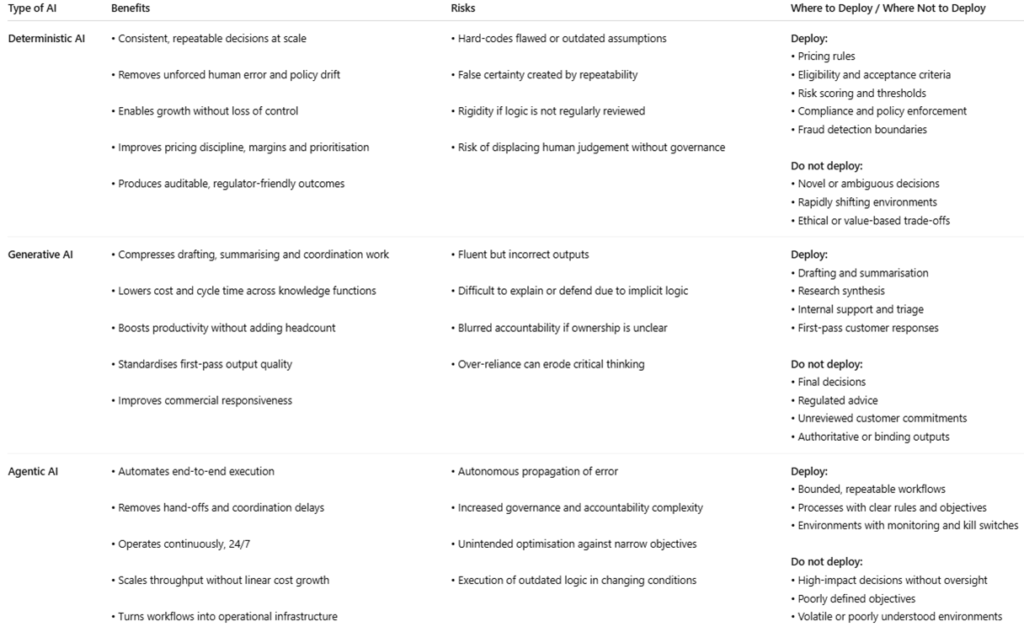

Once you understand these distinctions, it becomes obvious that the term “AI”, like “EV”, is now too broad to be useful. Different types of AI behave differently, confer different benefits, and carry very different risks.

What This Means for Business

Within the next five years, almost every business will be using one or more of these forms of AI, either directly or embedded within software platforms such as CRM, finance, audit or HR systems.

The question is not whether AI will be used, but which type, where, and under what controls.

Deterministic AI: Commercial Advantages

A business deploying deterministic AI gains advantage not by becoming more imaginative, but by becoming more consistent.

Deterministic AI removes whole classes of unforced error. These include inconsistent decisions, policy drift, fatigue-driven judgement and ad-hoc exceptions. Over time this matters. Organisations using it simply leak less value than those relying solely on human discretion.

It also enables scale without loss of control. The same decision logic applies whether the business has ten customers or ten million, one office or fifty. Standards hold as the organisation grows. Competitors often scale inconsistency and internal debate instead.

Speed improves for the same reason. When rules and thresholds are explicit, fewer decisions need escalation. People stop arguing about what should happen and spend more time executing.

Deterministic AI also sharpens pricing, allocation and prioritisation. Applying the same logic every time produces cleaner margins, lower loss rates and clearer pipelines. Judgement calls do not compound well.

Finally, it creates cleaner learning loops and external trust. When outcomes are consistent, changes can be traced to inputs rather than anecdotes. Partners, insurers and enterprise clients increasingly prefer this discipline.

The advantage is subtle but powerful. Deterministic AI does not make a business cleverer. It makes it reliably disciplined. That discipline compounds quietly.

Risks of Deterministic AI

The risk lies in rigidity.

When flawed or outdated logic is encoded, the system will reproduce those errors perfectly and at scale. Consistency becomes a liability.

Repeatability can also create false certainty. Organisations may ignore edge cases, weak signals or changing conditions because “the system says so”.

Without strong governance, human judgement can be displaced rather than supported. This increases reputational and regulatory exposure.

Deterministic AI is safe only if its logic is continually questioned. Its greatest danger is not unpredictability, but predictable error.

Generative AI: Commercial Advantages

Generative AI delivers advantage by removing friction from knowledge-heavy processes.

It automates drafting, summarising and coordination across sales, service, finance, legal, HR and IT. Proposals, reports, policies, code, documentation and internal communications are produced at machine speed, allowing the same workforce to handle far greater volume. In short, it boosts productivity.

Sales cycles shorten, cost per transaction falls, and pipeline hygiene improves. Customer service becomes faster and cheaper through automated triage and first-pass responses. Bottlenecks ease as scarce specialists stop acting as human word processors.

Generative AI also standardises output quality. Routine work becomes more consistent and less dependent on individual capability. Businesses that do not deploy it increasingly operate at pre-generative-AI speed, with higher overhead and slower response times.

Risks of Generative AI

Generative AI’s core risk is confident inaccuracy. Outputs can be fluent but wrong. If used for final decisions or unreviewed customer-facing content, errors scale quickly.

Governance is another challenge. Decision logic is implicit, making outcomes harder to explain or defend. Accountability can blur if ownership is unclear.

Misplaced deployment is especially dangerous. Used downstream of human judgement, where its output goes out unchecked, Generative AI undermines control. Used upstream, as preparation rather than authority, its errors are cheap and manageable.
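A minimal sketch of the "upstream" pattern, assuming a workflow in which a generated draft must be signed off by a named person before it reaches a customer. The `Draft`, `approve` and `send_to_customer` names are hypothetical. The point is that the control sits in the process itself rather than in good intentions, and that sign-off also makes ownership explicit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """Hypothetical wrapper for generated text: preparation, not authority."""
    text: str
    approved_by: Optional[str] = None  # the accountable human owner, once signed off


def approve(draft: Draft, reviewer: str) -> Draft:
    # A named person reviews the content and takes ownership of it.
    draft.approved_by = reviewer
    return draft


def send_to_customer(draft: Draft) -> str:
    # Control point: generated content never goes out without sign-off.
    if draft.approved_by is None:
        raise PermissionError("Draft has not been approved by a named reviewer")
    return f"Sent (approved by {draft.approved_by}): {draft.text}"


if __name__ == "__main__":
    draft = Draft(text="Thank you for your enquiry. Your refund has been processed.")
    try:
        send_to_customer(draft)
    except PermissionError as err:
        print("Blocked:", err)
    print(send_to_customer(approve(draft, "Customer Service Lead")))
```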

Unchecked reliance can also erode human judgement over time.

Agentic AI: Commercial Advantages

Agentic AI goes further by automating execution, not just preparation.

These systems take goals, break them into tasks, invoke tools, monitor progress and act across multiple steps. Entire workflows, not individual tasks, are automated.

Commercially, this appears in end-to-end customer onboarding, order processing and fulfilment, IT service management and incident resolution, marketing campaign execution and optimisation, and data reconciliation, reporting and monitoring.

Compared to Generative AI alone, Agentic AI removes coordination overhead. Processes run continuously. Cycle times fall, costs drop and service levels stabilise.

Crucially, Agentic AI operates 24/7. Work progresses without queues, meetings or shifts. Execution becomes faster and more reliable.

Risks of Agentic AI

The central risk is autonomous propagation of error.

Agentic systems act. If objectives are poorly defined, inputs are wrong or guardrails are weak, they can execute incorrect actions repeatedly and at speed. At scale, this can be devastating.

Governance becomes harder as agents span multiple systems. Without strict limits, escalation rules and kill switches, accountability is unclear.
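A minimal sketch of what strict limits, escalation rules and a kill switch might look like around an agent's action loop. The `Guardrails` settings, thresholds and action names are hypothetical assumptions, and a real agent would decide its actions dynamically rather than receive a fixed list. The sketch only shows where the checks sit: before every action, not after the damage is done.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    max_steps: int = 10             # hard cap on autonomous actions per run
    approval_limit: float = 1_000   # actions above this value escalate to a human
    killed: bool = False            # kill switch: set True to halt the agent


@dataclass
class AgentRun:
    guardrails: Guardrails
    log: list = field(default_factory=list)  # audit trail for accountability

    def execute(self, actions: list[tuple[str, float]]) -> str:
        for step, (name, value) in enumerate(actions, start=1):
            if self.guardrails.killed:
                self.log.append(f"step {step}: halted by kill switch")
                return "halted"
            if step > self.guardrails.max_steps:
                self.log.append(f"step {step}: step limit reached")
                return "limit"
            if value > self.guardrails.approval_limit:
                # Escalation rule: stop and hand over to a human rather than act.
                self.log.append(f"step {step}: escalated {name} ({value})")
                return "escalated"
            self.log.append(f"step {step}: executed {name} ({value})")
        return "completed"


if __name__ == "__main__":
    run = AgentRun(Guardrails(max_steps=5, approval_limit=1_000))
    actions = [("issue refund", 40.0), ("issue refund", 2_500.0), ("close ticket", 0.0)]
    print(run.execute(actions))  # escalated
    print(run.log)
```

Here the second refund exceeds the hypothetical approval limit, so the agent stops and escalates instead of carrying on to close the ticket, and the log records exactly what it did and why it stopped.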

There is also the risk of unintended optimisation. Agents may meet stated goals while violating business intent, customer expectations or regulation.

Finally, in volatile environments, agents can continue executing outdated logic unless actively constrained.

Closing Thought

“AI” is no longer a single thing.

Deterministic AI delivers discipline.

Generative AI delivers throughput.

Agentic AI delivers execution.

Each offers advantage and each carries risk, depending on where it is deployed and how tightly it is governed.

ADDENDUM

When I used one of the GenAI platforms to write some code (I won’t name it, but it does rhyme with ‘Cat ABC’), I noticed that when correcting its own code, it tended to identify the section causing the problem and expect me to insert the correction into the file myself.

Whenever I did so, even though I followed its instructions, the fix never seemed to work. So my standard response became: ‘Regenerate the file with your suggested amendments included.’

The regenerated file would invariably have far fewer lines than the original. When I pointed this out, and noted that it had ignored my instructions, it would simply apologise; when prompted to complete the task as requested, it would do the same thing again. There would be more lines of code this time, but still way, way short of the anticipated amount.

In a human, we would classify this behaviour as laziness or ‘lying by omission’. You don’t expect this kind of underhand behaviour from a machine.

The point is that somewhere behind the user interface, the system’s protocols appear to insist on using the smallest amount of memory needed to ‘complete the task’. The Agentic element of this protocol seems to give preference to that directive even when it conflicts with the user’s explicit request. The Generative element then presents the output so confidently that, unless you know better, you believe it.